Overview

This guide demonstrates how to develop an app that captures data using Workflow Input. The example focuses on scanning an identification document; a similar procedure applies to the other OCR features (License Plate, Vehicle Identification Number (VIN), Tire Identification Number (TIN), Meter Reading), as well as Free-Form Image Capture and Document Capture.

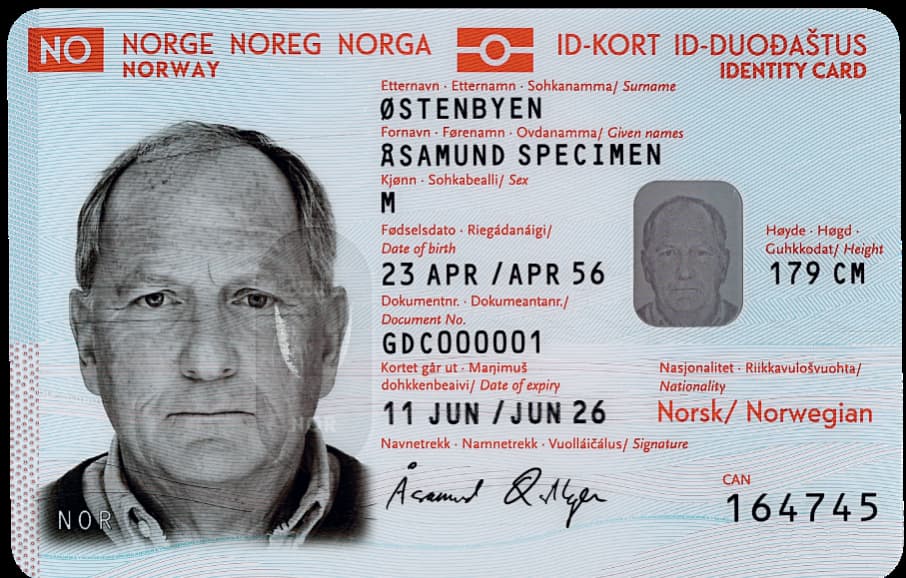

Sample identification documents used:

Sample driver licenses from the US (Pennsylvania) and Norway

Video providing step-by-step instructions on how to develop an app to capture data from an identification document:

Download source code files.

Requirements

- DataWedge version 11.2 or higher (find the version)

- Scanning framework 32.0.3.6 or higher (find the version)

- Android 11 or higher

- Built-in camera on mobile computer

- Mobility DNA OCR Wedge license - required for each individual OCR feature:

  - Mobility DNA License Plate OCR Wedge License

  - Mobility DNA Identification Documents OCR Wedge License

  - Mobility DNA Vehicle Identification Number OCR Wedge License

  - Mobility DNA Tire Identification Number OCR Wedge License

  - Mobility DNA Meter Reading OCR Wedge License

Package Visibility

IMPORTANT: Due to package visibility restrictions imposed by Android 11 (API 30), DataWedge apps targeting Android 11 or later must include the following <queries> element in the AndroidManifest.xml file:

<queries>

<package android:name="com.symbol.datawedge" />

</queries>

Steps to Capture Data

In summary, the steps to capture data using Workflow Input are:

- Create a DataWedge profile and associate it to the application.

- Configure the application to receive scan results.

- Extract string data from the intent.

- Extract image data from the intent.

These steps are explained in detail in the following subsections.

1. Create a DataWedge profile and associate it to the application

Create a profile in DataWedge and associate the profile to the app with the following settings:

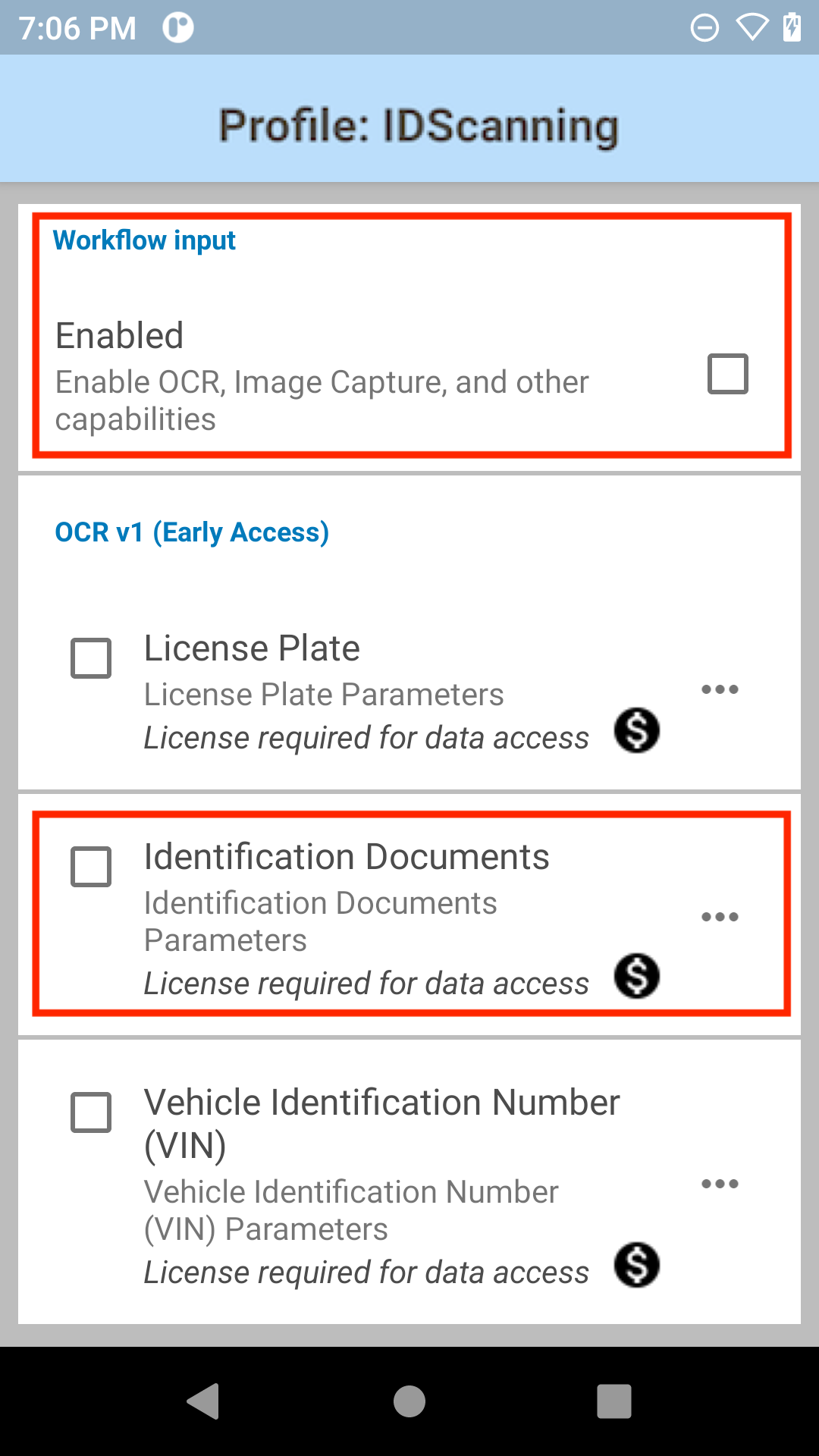

A. Enable Workflow Input.

B. Under OCR, enable the desired OCR feature(s): License Plate, Identification Document, Vehicle Identification Number (VIN), Tire Identification Number (TIN), Meter Reading. In this case, enable Identification Documents.

Enable Workflow Input and Identification Documents

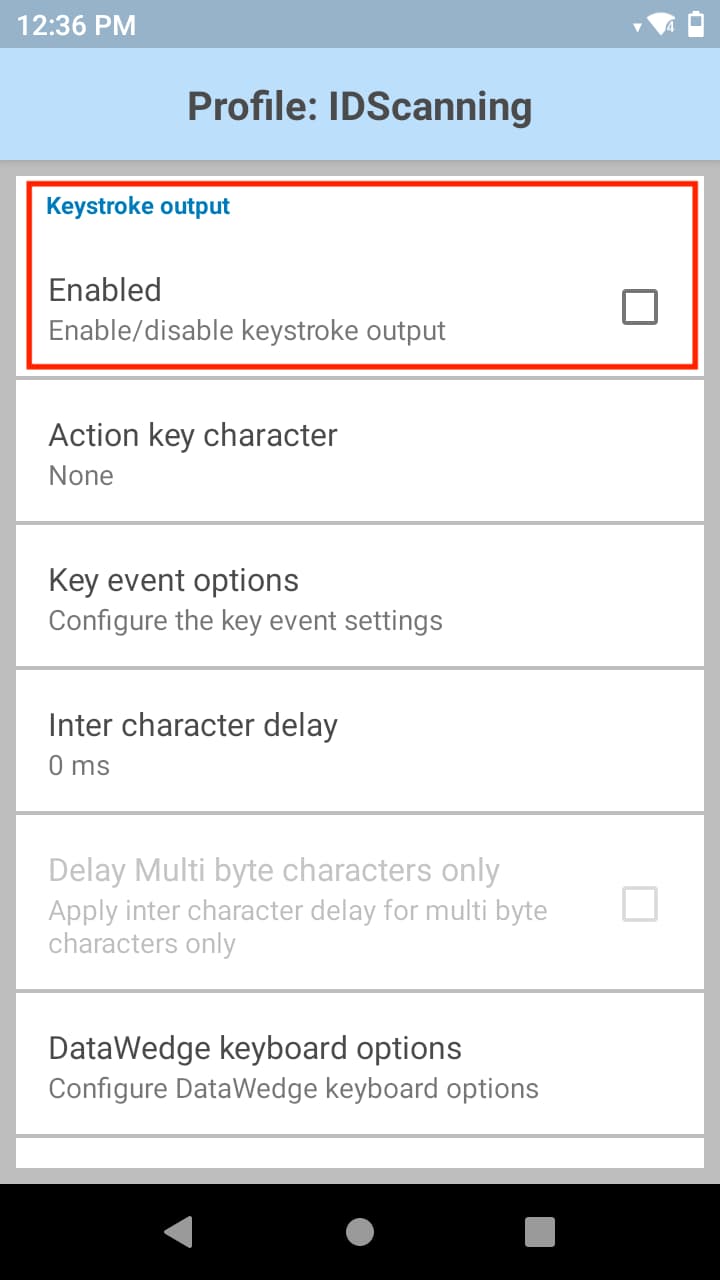

C. If keystroke data is not intended to be used, disable Keystroke Output.

Disable Keystroke Output

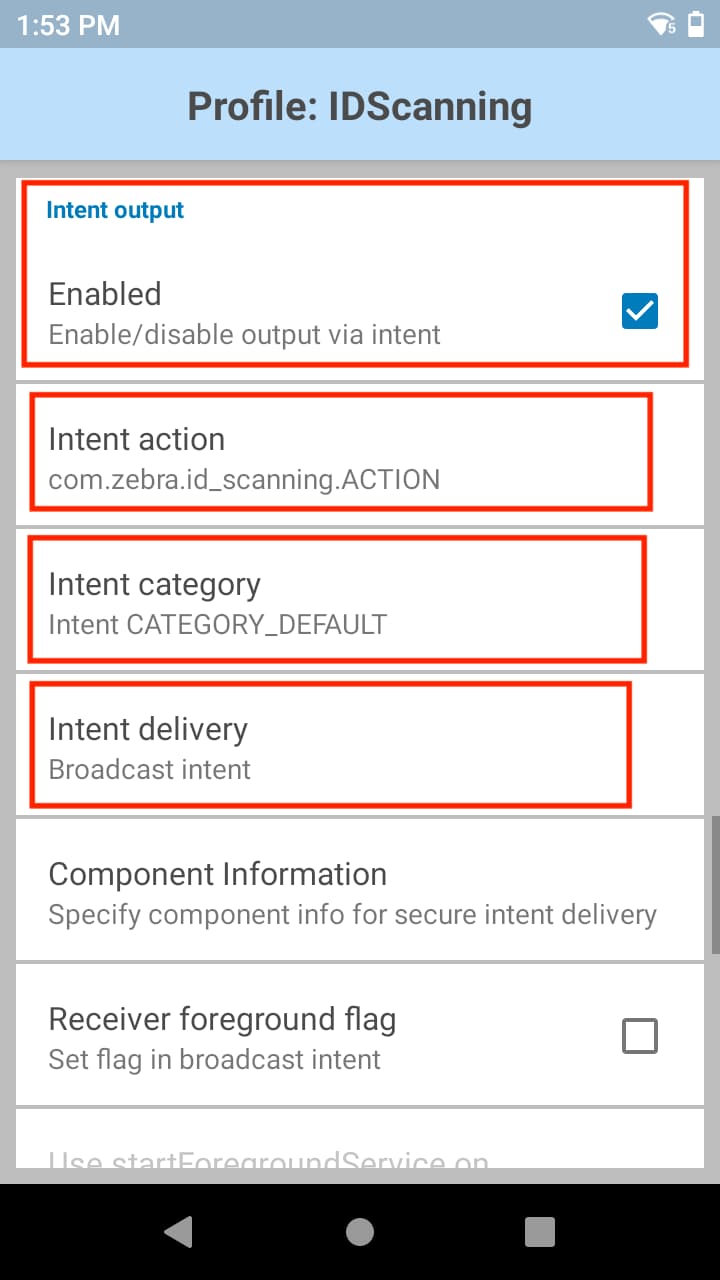

D. Enable Intent Output and perform the following:

Specify the Intent Action and Intent Category that the app uses to register its broadcast receiver (see step 2). In this example:

• Intent Action: com.zebra.id_scanning.ACTION

• Intent Category: Intent.CATEGORY_DEFAULT

Select the Intent Delivery mechanism. In this example, select Broadcast Intent.

Intent Output configurations

2. Configure the application to receive scan results

Create an Android application (e.g. IDScanningApp). Register a broadcast receiver to receive scan results from broadcast intents:

@Override

protected void onCreate(Bundle savedInstanceState) {

super.onCreate(savedInstanceState);

setContentView(R.layout.activity_main2);

IntentFilter intentFilter = new IntentFilter();

intentFilter.addAction("com.zebra.id_scanning.ACTION");

intentFilter.addCategory(Intent.CATEGORY_DEFAULT);

registerReceiver(broadcastReceiver, intentFilter);

}

BroadcastReceiver broadcastReceiver = new BroadcastReceiver() {

@Override

public void onReceive(Context context, Intent intent) {

}

};

3. Extract string data from the intent

The scan result intent contains a String extra called com.symbol.datawedge.data, which holds the result data in JSON array format. Example data for an identification document:

[

{

"group_id":"Uncategorized",

"imageformat":"YUV",

"orientation":"90",

"height":"1080",

"stride":"1920",

"size":"4147198",

"label":"ID card",

"width":"1920",

"uri":"content:\/\/com.symbol.datawedge.decode\/18-2-1",

"data_size":4147198

},

{

"group_id":"Result",

"string_data":"90",

"label":"weight",

"uri":"content:\/\/com.symbol.datawedge.decode\/18-2-2",

"data_size":2

},

{

"group_id":"Result",

"string_data":"M",

"label":"sex",

"uri":"content:\/\/com.symbol.datawedge.decode\/18-2-3",

"data_size":1

},

{

"group_id":"Result",

"string_data":"C91993805",

"label":"documentNumber",

"uri":"content:\/\/com.symbol.datawedge.decode\/18-2-5",

"data_size":9

},

{

"group_id":"Result",

"string_data":"ADAMS",

"label":"lastName",

"uri":"content:\/\/com.symbol.datawedge.decode\/18-2-6",

"data_size":5

}

]

In the broadcast receiver, parse the data from the com.symbol.datawedge.data field into a JSONArray object. Each element of the JSONArray is a JSONObject.

BroadcastReceiver broadcastReceiver = new BroadcastReceiver() {

@Override

public void onReceive(Context context, Intent intent) {

Bundle bundle = intent.getExtras();

String jsonData = bundle.getString("com.symbol.datawedge.data");

try {

JSONArray jsonArray = new JSONArray(jsonData);

}

catch (Exception ex)

{

//Check error

}

}

};

Iterate through the elements of the JSONArray object to extract the data for each field:

- If the JSONObject has a mapping for the name “string_data”, it means this JSONObject contains String data. Use the value mapped by the name “label” to identify the type of string data based on the OCR feature. See OCR Result Output for reference on the field names supported to extract the result data.

- If the JSONObject does not have a mapping for the name “string_data”, it means this JSONObject contains Image data. Proceed with the next step to extract the image data.
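The two rules above can be sketched without any Android classes. This is a minimal illustration, assuming each JSONObject has already been copied into a Map of field name to value; `ScanResultSorter` and its members are hypothetical names used only for this example. On Android, the same check would typically be made with jsonObject.has("string_data").

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: splits parsed result entries into text fields and image entries. */
final class ScanResultSorter {
    /** Maps "label" to "string_data" for every entry that carries text. */
    final Map<String, String> textFields = new LinkedHashMap<>();
    /** Entries without "string_data"; these describe image buffers. */
    final List<Map<String, String>> imageEntries = new ArrayList<>();

    static ScanResultSorter sort(List<Map<String, String>> entries) {
        ScanResultSorter result = new ScanResultSorter();
        for (Map<String, String> entry : entries) {
            if (entry.containsKey("string_data")) {
                // Text entry: the label identifies which field was read.
                result.textFields.put(entry.get("label"), entry.get("string_data"));
            } else {
                // Image entry: keep it so its "uri" can be read in the next step.
                result.imageEntries.add(entry);
            }
        }
        return result;
    }
}
```

Keeping the text fields in a LinkedHashMap preserves the order in which DataWedge returned them, which can be convenient when displaying results.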

4. Extract image data from the intent

Make sure the following permission is added in the application manifest file to access the DataWedge Content Provider:

<uses-permission android:name="com.symbol.datawedge.permission.contentprovider" />

IMPORTANT: DataWedge apps targeting Android 11 or later must include the following <queries> element in the AndroidManifest.xml file due to package visibility restrictions imposed by Android 11 (API 30):

<queries>

<package android:name="com.symbol.datawedge" />

</queries>

Use the value from the “uri” field to get the URI to access the DataWedge Content Provider. Then use a ContentResolver to pass the URI and retrieve a Cursor object. The Cursor object contains two columns:

| Name | Description |

|---|---|

| raw_data | Contains the image data in byte[] format |

| next_data_uri | URI to be used to retrieve the remaining Image data. If there is no remaining image data, this field will be empty. |

Read the raw_data and save it in a ByteArrayOutputStream object. If next_data_uri is not empty, read the raw_data from the URI provided in the next_data_uri column. Continue to read the raw_data field and store the value into the ByteArrayOutputStream object, until the next_data_uri is empty. This can be done using a while loop:

String uri = jsonObject.getString("uri");

Cursor cursor = getContentResolver().query(Uri.parse(uri),null,null,null);

ByteArrayOutputStream baos = new ByteArrayOutputStream();

if(cursor != null)

{

cursor.moveToFirst();

baos.write(cursor.getBlob(cursor.getColumnIndex("raw_data")));

String nextURI = cursor.getString(cursor.getColumnIndex("next_data_uri"));

while (nextURI != null && !nextURI.isEmpty())

{

Cursor cursorNextData = getContentResolver().query(Uri.parse(nextURI),

null,null,null);

if(cursorNextData != null)

{

cursorNextData.moveToFirst();

baos.write(cursorNextData.getBlob(cursorNextData.

getColumnIndex("raw_data")));

nextURI = cursorNextData.getString(cursorNextData.

getColumnIndex("next_data_uri"));

cursorNextData.close();

}

else

{

break; // No cursor returned for nextURI; stop to avoid looping forever

}

}

cursor.close();

}
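The chunk-following logic can also be separated from the Android classes so that it can be unit tested off-device. The sketch below is one possible arrangement, not the DataWedge API: `ChunkedImageReader`, `Chunk`, and the `fetch` function are hypothetical names, where `fetch` stands in for a ContentResolver query that returns the raw_data and next_data_uri columns for a given URI.

```java
import java.io.ByteArrayOutputStream;
import java.util.function.Function;

/** Hypothetical helper: concatenates image chunks linked by next_data_uri. */
final class ChunkedImageReader {
    /** One provider row: the bytes plus the URI of the next chunk. */
    static final class Chunk {
        final byte[] rawData;
        final String nextDataUri; // null or empty when this is the last chunk

        Chunk(byte[] rawData, String nextDataUri) {
            this.rawData = rawData;
            this.nextDataUri = nextDataUri;
        }
    }

    /** Follows next_data_uri links starting at firstUri and returns all bytes. */
    static byte[] readAll(String firstUri, Function<String, Chunk> fetch) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        String uri = firstUri;
        while (uri != null && !uri.isEmpty()) {
            Chunk chunk = fetch.apply(uri);
            if (chunk == null) {
                break; // nothing returned for this URI; stop instead of looping forever
            }
            out.write(chunk.rawData, 0, chunk.rawData.length);
            uri = chunk.nextDataUri;
        }
        return out.toByteArray();
    }
}
```

In an app, `fetch` would wrap the cursor query and close the cursor before returning; in a test, it can simply look chunks up in a Map.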

When all values are stored for the image data, read the values mapped by the field names supported by image output (e.g. “width”, “height”, etc.) shown in the table below to construct the Bitmap object.

Image Output:

| Field Name | Type | Description |

|---|---|---|

| label | string | Image label name that specifies the type of sample: “License Plate”, “Identification Document”, “VIN”, "TIN", “Meter” |

| width | int | Width of the image in pixels |

| height | int | Height of the image in pixels |

| size | int | Size of the image buffer in pixels |

| stride | int | Width of a single row of pixels of an image |

| imageformat | string | Supported formats: Y8, YUV. Note: The YUV format must be interpreted as NV12. |

| orientation | int | Rotation of the image buffer, in degrees. Values: 0, 90, 180, 270 |
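The imageformat row states that YUV buffers are NV12, which stores each chroma pair as U then V. Some consumers expect NV21 instead, which stores V then U. The sketch below shows one way to convert between the two layouts. It assumes the Y plane occupies stride × height bytes and that the interleaved chroma plane follows it directly; `Nv12ToNv21` is a hypothetical helper name and is not part of DataWedge. Whether the swap is needed depends on how the buffer is decoded, so treat it as something to try if colors appear swapped in the resulting image.

```java
/** Hypothetical helper: reorders the chroma bytes of an NV12 buffer into NV21 order. */
final class Nv12ToNv21 {
    /**
     * Returns a copy of the buffer with every (U, V) pair rewritten as (V, U).
     * Assumes the Y plane is stride * height bytes and chroma follows immediately.
     */
    static byte[] convert(byte[] nv12, int stride, int height) {
        byte[] nv21 = nv12.clone();
        int chromaStart = stride * height;
        // Walk the chroma plane two bytes at a time and swap each pair.
        for (int i = chromaStart; i + 1 < nv21.length; i += 2) {
            byte u = nv21[i];
            nv21[i] = nv21[i + 1];
            nv21[i + 1] = u;
        }
        return nv21;
    }
}
```

The input array is left unmodified, so the original buffer remains available if the conversion turns out to be unnecessary.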

Use the getBitmap() method of the ImageProcessing class (provided below) to get the Bitmap object:

int width = 0;

int height = 0;

int stride = 0;

int orientation = 0;

String imageFormat = "";

width = jsonObject.getInt("width");

height = jsonObject.getInt("height");

stride = jsonObject.getInt("stride");

orientation = jsonObject.getInt("orientation");

imageFormat = jsonObject.getString("imageformat");

Bitmap bitmap = ImageProcessing.getInstance().getBitmap(baos.toByteArray(),imageFormat, orientation,stride,width, height);

Class ImageProcessing:

/*

* Copyright (C) 2018-2021 Zebra Technologies Corp

* All rights reserved.

*/

package com.zebra.idscanningapp;

import android.graphics.Bitmap;

import android.graphics.BitmapFactory;

import android.graphics.ImageFormat;

import android.graphics.Matrix;

import android.graphics.Rect;

import android.graphics.YuvImage;

import java.io.ByteArrayOutputStream;

public class ImageProcessing{

private final String IMG_FORMAT_YUV = "YUV";

private final String IMG_FORMAT_Y8 = "Y8";

private static ImageProcessing instance = null;

public static ImageProcessing getInstance() {

if (instance == null) {

instance = new ImageProcessing();

}

return instance;

}

private ImageProcessing() {

//Private Constructor

}

public Bitmap getBitmap(byte[] data, String imageFormat, int orientation, int stride, int width, int height)

{

if(imageFormat.equalsIgnoreCase(IMG_FORMAT_YUV))

{

ByteArrayOutputStream out = new ByteArrayOutputStream();

int uvStride = ((stride + 1)/2)*2; // calculate UV channel stride to support odd strides

YuvImage yuvImage = new YuvImage(data, ImageFormat.NV21, width, height, new int[]{stride, uvStride});

yuvImage.compressToJpeg(new Rect(0, 0, width, height), 100, out); // the rectangle must lie inside the width x height image

yuvImage.getYuvData();

byte[] imageBytes = out.toByteArray();

if(orientation != 0)

{

Matrix matrix = new Matrix();

matrix.postRotate(orientation);

Bitmap bitmap = BitmapFactory.decodeByteArray(imageBytes, 0, imageBytes.length);

return Bitmap.createBitmap(bitmap, 0 , 0, bitmap.getWidth(), bitmap.getHeight(), matrix, true);

}

else

{

return BitmapFactory.decodeByteArray(imageBytes, 0, imageBytes.length);

}

}

else if(imageFormat.equalsIgnoreCase(IMG_FORMAT_Y8))

{

return convertYtoJPG_CPU(data, orientation, stride, height);

}

return null;

}

private Bitmap convertYtoJPG_CPU(byte[] data, int orientation, int stride, int height)

{

int mLength = data.length;

int [] pixels = new int[mLength];

for(int i = 0; i < mLength; i++)

{

int p = data[i] & 0xFF;

pixels[i] = 0xff000000 | p << 16 | p << 8 | p;

}

if(orientation != 0)

{

Matrix matrix = new Matrix();

matrix.postRotate(orientation);

Bitmap bitmap = Bitmap.createBitmap(pixels, stride, height, Bitmap.Config.ARGB_8888);

return Bitmap.createBitmap(bitmap, 0 , 0, bitmap.getWidth(), bitmap.getHeight(), matrix, true);

}

else

{

return Bitmap.createBitmap(pixels, stride, height, Bitmap.Config.ARGB_8888);

}

}

}
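Note that convertYtoJPG_CPU() uses stride as the bitmap width, so if a row contains padding bytes beyond the visible width, they would show up as extra columns in the Bitmap. One way to avoid that is to repack the buffer before converting it. The sketch below assumes a Y8 buffer with one byte per pixel and stride measured in bytes per row; `RowPadding` is a hypothetical helper name.

```java
/** Hypothetical helper: removes per-row padding from a single-plane (Y8) buffer. */
final class RowPadding {
    /** Copies the first width bytes of each stride-sized row into a tightly packed array. */
    static byte[] removePadding(byte[] data, int stride, int width, int height) {
        if (width > stride) {
            throw new IllegalArgumentException("width must not exceed stride");
        }
        if (height > 0 && data.length < (long) stride * (height - 1) + width) {
            throw new IllegalArgumentException("buffer is too small for the given dimensions");
        }
        byte[] packed = new byte[width * height];
        for (int row = 0; row < height; row++) {
            System.arraycopy(data, row * stride, packed, row * width, width);
        }
        return packed;
    }
}
```

After repacking, the result could be passed on with width in place of stride. When stride equals width, the method returns an identical copy.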

OCR Result Output

The scan result intent contains a String extra called com.symbol.datawedge.data from which the data can be extracted. This section provides the Field Names available for each OCR feature, with additional Field Names available specifically for identification documents. See Extract string data from the intent for instructions on usage.

OCR Result

| Field Name | Description |

|---|---|

| string_data | Text data read from OCR-based features: license plate, vehicle identification number, tire identification number, or meter. The exception is identification document, which can return many different Field Names; see the Identification Document section below. |

| data_size | Length of the data |

Identification Document

The table below displays the data fields that can be read from driver licenses, ID cards and/or insurance cards. Some field names are different for documents based on different geographical regions.

The data returned depends on the content of the identification document. Even if a Field Name (in the table below) is designated as Mandatory, it may not exist in some identification documents. In those cases, no data is returned when that Field Name is queried. For example:

- Mexican voter ID (Type C) - date of birth and document number do not exist

- Mexican voter ID (Type G) - document number does not exist

- Australian driving license (NSW) - first name and last name do not exist as separate fields; they are combined into a single field called "name"
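Because a field may be absent, code that reads the results should not assume any particular Field Name is present. As a minimal sketch, the helper below builds a display name from firstName and lastName when available and falls back to the combined name field otherwise. It assumes the text results have already been collected into a Map of label to string_data; `HolderName` is a hypothetical name used only for illustration.

```java
import java.util.Map;

/** Hypothetical helper: picks the best available name from the scanned fields. */
final class HolderName {
    /** Returns "first last" when either part exists, else the "name" field, else "". */
    static String resolve(Map<String, String> fields) {
        String first = fields.getOrDefault("firstName", "");
        String last = fields.getOrDefault("lastName", "");
        String joined = (first + " " + last).trim();
        if (!joined.isEmpty()) {
            return joined;
        }
        // Some documents combine both parts into a single "name" field.
        return fields.getOrDefault("name", "");
    }
}
```

The same pattern applies to other fields, for example treating a missing documentNumber as an empty value rather than an error.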

Legend:

- O - Optional

- M - Mandatory

| No. | Field Name | Driver License | ID Card | Insurance Card |

|---|---|---|---|---|

| 1 | additionalInformation | N/A | O | N/A |

| 2 | additionalInformation1 | N/A | O | N/A |

| 3 | address | M | N/A | N/A |

| 4 | age | N/A | O | N/A |

| 5 | audit | O | N/A | N/A |

| 6 | authority | O | O | M |

| 7 | cardAccessNumber | N/A | O | N/A |

| 8 | cardNumber | O | N/A | N/A |

| 9 | categories | O | N/A | N/A |

| 10 | citizenship | N/A | O | N/A |

| 11 | cityNumber | N/A | O | N/A |

| 12 | class | O | N/A | N/A |

| 13 | conditions | O | N/A | N/A |

| 14 | country | O | O | N/A |

| 15 | dateOfBirth | M | M | M |

| 16 | dateOfExpiry | O | O | M |

| 17 | dateOfIssue | M | O | N/A |

| 18 | dateOfRegistration | N/A | O | N/A |

| 19 | divisionNumber | N/A | O | N/A |

| 20 | documentDiscriminator | O | N/A | N/A |

| 21 | documentNumber | M | M | M |

| 22 | duplicate | O | N/A | N/A |

| 23 | endorsements | O | N/A | N/A |

| 24 | eyes | O | N/A | N/A |

| 25 | familyName | N/A | O | N/A |

| 26 | fathersName | N/A | O | N/A |

| 27 | firstIssued | O | N/A | N/A |

| 28 | firstName | M | M | M |

| 29 | folio | N/A | O | N/A |

| 30 | fullName | N/A | O | N/A |

| 31 | hair | O | N/A | N/A |

| 32 | height | O | O | N/A |

| 33 | lastName | M | M | M |

| 34 | licenseClass | N/A | O | N/A |

| 35 | licenseType | N/A | O | N/A |

| 36 | municipalityNumber | N/A | O | N/A |

| 37 | name | M | N/A | N/A |

| 38 | nationalId | N/A | O | N/A |

| 39 | nationality | N/A | O | M |

| 40 | office | O | N/A | N/A |

| 41 | parentsGivenName | N/A | O | N/A |

| 42 | parish | O | N/A | N/A |

| 43 | personalNumber | O | O | M |

| 44 | placeAndDateOfBirth | N/A | O | N/A |

| 45 | placeOfBirth | O | O | N/A |

| 46 | previousType | O | N/A | N/A |

| 47 | restrictions | O | N/A | N/A |

| 48 | revoked | O | N/A | N/A |

| 49 | sex | O | O | N/A |

| 50 | stateNumber | N/A | O | N/A |

| 51 | supportNumber | N/A | O | N/A |

| 52 | type | O | N/A | N/A |

| 53 | version | O | N/A | N/A |

| 54 | verticalNumber | O | N/A | N/A |

| 55 | voterId | N/A | O | N/A |

| 56 | weight | O | N/A | N/A |
